RCS Campaign Ops

Launch better RCS journeys with zero guesswork.

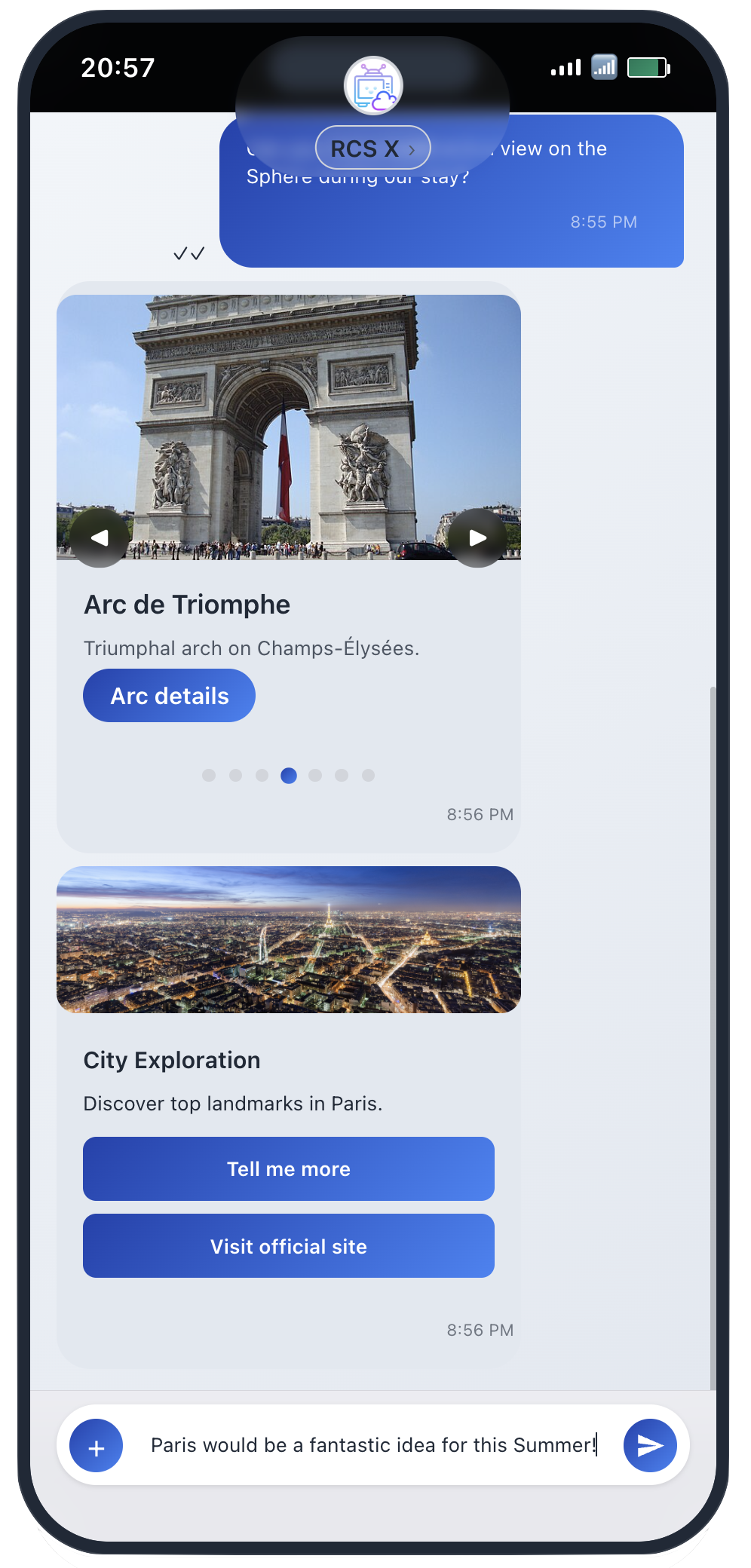

RCSX gives developers and marketing teams a production-like iPhone emulator, event console, and payload validator in one focused workflow. Prototype faster, test deeper, and ship with confidence.

- Schema validation

- Real-time callbacks

- High-fidelity iPhone preview

No whitelisting needed.

Built for Every RCS Team

One platform for campaign strategy, payload implementation, and enterprise-grade validation workflows.

Prototype high-converting campaigns

Preview rich cards, media, and conversation journeys before launch. Iterate messaging fast without production waits.

Validate payloads and callbacks

Use JSON validation, simulator rendering, and event visibility in one loop to catch issues before release.

De-risk rollout with test coverage

Standardize QA across teams, reduce campaign errors, and launch high-volume programs with more confidence.

From Payload to Event Signal

RCSX compresses the build-and-verify loop into three deterministic steps so teams can move from idea to validated campaign without waiting on carrier cycles.

Compose

Draft or paste your RCS payload and validate schema instantly.

Render

Preview the exact conversation in a high-fidelity iPhone simulation.

Observe

Capture engagement and callback signals in real time for QA and optimization.

Simple Usage-Based Plans

Start free, then scale by message throughput and team size. No lock-in, no hidden setup tier.

Most teams start with Pro and upgrade when throughput grows.

Free

$0

1 seat

10 msgs/day

100 msgs/month

Pro

$10 /month

1 seat

100 msgs/day

1,000 msgs/month

Team

$30 /month

5 seats

1,000 msgs/day

10,000 msgs/month

Enterprise

$200 /month

Unlimited seats

10,000 msgs/day

100,000 msgs/month

Common Questions

Answers to the key decisions teams make before adopting RCS testing infrastructure.

What is RCSX?

An RCS emulator for developers and campaign teams to test business messaging flows before production.

How does the iPhone emulator help?

It provides a realistic mobile rendering surface so teams can validate conversation quality and interaction behavior before launch.

Can I test cards and carousels?

Yes. Rich cards, carousel layouts, media payloads, and interactions are supported.

Is API automation supported?

Yes. Your backend and QA scripts can trigger sends and validate flows programmatically.

Can teams collaborate on QA?

Yes. Product, marketing, and engineering can review the same flows and verify behavior before a campaign goes live.

How are authentication and API keys handled?

Dashboard sessions use JWT authentication, and message APIs use scoped keys with an rcs_* prefix for secure operations.

Can I test user interactions?

Yes. Suggested actions, quick replies, and click flows can be exercised end to end with callback visibility.

Is there a free tier?

Yes. You can start at no cost and upgrade as throughput and collaboration needs grow.

Ready to test your next campaign with confidence?

Set up in minutes, validate rendering, and ship with fewer surprises.

From the RCS X Blog

Release notes, architecture decisions, and feature deep-dives from the team shipping RCS testing infrastructure.

RCS vs WhatsApp Business: The Ecosystem Gap in 2026

We pulled data from 10 sources, including GitHub, Reddit, Stack Overflow, Hacker News, and CPaaS documentation. The gap shows up on every dimension we measured. Here's what the numbers actually show.

We spent a morning pulling the actual numbers. Here's what 10 verified data points across 12 months show.

The Paradox

26% of brands are already sending RCS. Traffic grew 550% in 2024. Tells just got approved for US RCS Business Messaging. Apple now supports RCS. Google has been all-in for years.

And yet — find a public community forum where RCS is being actively discussed, and you hit a wall almost immediately.

We decided to test whether the conversation gap was real or just our perception, so we collected data from 10 platforms over a 12-month lookback period. Here's what we found.

1. GitHub: Developer Activity

Data source: GitHub REST API, March 2026

| Metric | WhatsApp Business | RCS |

|---|---|---|

| Total repositories | 748 | 88 |

| Top repo stars | 472 | 14 |

| Official vendor SDKs | 6+ languages | 1 (Java, Google) |

WhatsApp Business has an official SDK from Meta (and WhatsApp), maintained by both Meta engineers and the open-source community. The top repository — WhatsApp's own setup scripts — has 472 stars.

RCS GitHub activity splits into two buckets: ~88 general RCS repositories (mostly consumer/personal RCS projects), and ~18 specifically for RCS Business Messaging. The top RCS Business Messaging repository is Google's Java client library, with 14 stars.

More telling: WhatsApp repositories show continuous updates throughout 2025–2026. RCS repositories show dead periods — some haven't been updated in 12+ months.
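Counts like the ones in the table above can be approximated with GitHub's repository search endpoint, which returns a `total_count` field. This is a minimal sketch, assuming Node 18+ for the built-in `fetch`. The search terms are illustrative guesses, not the exact queries behind the table, so the totals will differ.

```javascript
// Sketch: count repositories matching a search term via the GitHub REST API.
// The query strings are assumptions, not the queries used for the table above.
const buildSearchUrl = (term) =>
  `https://api.github.com/search/repositories?q=${encodeURIComponent(term)}&per_page=1`;

const countRepos = async (term) => {
  const res = await fetch(buildSearchUrl(term), {
    headers: { Accept: 'application/vnd.github+json' },
  });
  if (!res.ok) throw new Error(`GitHub search failed: ${res.status}`);
  const body = await res.json();
  return body.total_count; // total matches, not just the first page
};

// Usage (unauthenticated requests are rate-limited):
// countRepos('"whatsapp business"').then(console.log);
```

Results depend heavily on the search term chosen, so record the exact query next to any number you publish.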

2. Reddit: Community Size and Activity

Data source: Reddit REST API, March 2026

| Metric | WhatsApp Business API | RCS Messaging |

|---|---|---|

| Community members | 4,594 | 4 |

| Posts (2026) | Multiple per week | ~1 per 12+ months |

r/WhatsappBusinessAPI has 4,594 members and regular new posts. r/RCSMessaging has 4 members and almost no activity.

The most recent RCS post in that community? A German-language post about European retail brands using RCS — Kaufland and mömax. The geographic concentration of RCS community conversation appears to be Europe, specifically Germany. That's a meaningful signal about where the public RCS conversation is actually happening.

3. Stack Overflow: Developer Q&A

Data source: Stack Exchange API, March 2026

WhatsApp Business has active threads on Stack Overflow as of this week — implementation questions with detailed answers, dozens of views per question.

RCS: one question found, last active in 2022. The title: "How to Hello World RCS Messaging?"

That's not a question people ask when there's a mature ecosystem. It's a question people stop asking because there's nowhere to get an answer.

4. Hacker News: Developer News Mentions

Data source: HN Algolia API + site search, March 2026

WhatsApp appears on Hacker News regularly — via the WhatsApp blog, which posts feature announcements and product updates that the HN community engages with.

RCS: no dedicated Hacker News thread found. No RCS-specific community on HN.

The WhatsApp conversation on HN is developer-interest driven. The RCS conversation in public tech communities is essentially nonexistent.

5. CPaaS Platform Documentation Depth

Data source: Direct documentation review, Twilio.com and Vonage.com, March 2026

This one is subtle but important. Both Twilio and Vonage have RCS documentation — it exists. But:

Twilio WhatsApp:

- Dedicated full documentation section

- Verify API integration

- Conversations API support for WhatsApp

- Multiple sample applications

- Video walkthroughs and tutorials

- Community forum links

Twilio RCS:

- Documentation exists — but secondary placement

- No Conversations API integration

- No video tutorials

- One official sample application (Java RBM capability check)

Vonage shows the same pattern. CPaaS platforms invest documentation resources where developer demand exists. The RCS docs exist because RCS exists as a product, but the tutorial depth, sample code, and community infrastructure that would indicate developer demand aren't there yet.

6. Blog Content Volume: CPaaS and Industry

Data source: Direct review of Twilio, Vonage, Bandwidth, and Sinch blogs, March 2026

WhatsApp Business content from CPaaS platforms is tutorial and case-study focused — "how to build this," "how we helped this brand."

RCS content from the same platforms is carrier and enterprise-announcement focused — partnerships, approvals, new market entries.

These are different audiences. Different conversations. The WhatsApp content is developer and marketer-facing. The RCS content is carrier and enterprise-partnership-facing.

7. AI Agent Marketing Workflows: WhatsApp Business Today

Data source: Web search + platform review, March 2026

This is where the gap becomes most concrete for marketers specifically.

On WhatsApp Business, you can do this today:

- Meta AI agent APIs — official integration path, maintained by Meta

- Twilio Autopilot — WhatsApp-supported AI routing, with documented tutorials

- Vonage AI Studio — WhatsApp templates pre-built

- Open-source AI agent templates — multiple on GitHub with active maintenance

- Agencies offering WhatsApp AI agent implementation as a service — multiple, with published case studies

- "How I built this" posts — found multiple, with detailed technical walkthroughs

The equivalent on RCS:

- No central AI agent API — each carrier implements differently, no standard integration path

- No community-shared implementation templates

- No "how I built this" posts

- Minimal developer talent pool — most RCS expertise is carrier-side, not brand-side

For a marketing team trying to understand what AI-powered RCS customer engagement would look like, there is no public playbook to reference. For the same team evaluating WhatsApp Business, there are templates, agencies, tutorials, and case studies.

8. What RCS Would Require

The honest picture for marketers evaluating RCS:

The native capabilities are richer than WhatsApp — branded sender profiles, rich cards, carousels, suggested replies, read receipts, all inside the default Android messaging app (and now iOS 18). No app download required.

But the operational reality is different:

- No standard AI agent API — you're working with individual carriers, each with their own integration requirements

- No established template library — you're building from scratch

- Testing gap — no reliable way to validate rich media rendering across devices and carriers before launch

- Brand/carrier approval process — adds friction to rapid iteration

- Developer talent pool — minimal compared to WhatsApp Business

This isn't a technology gap. RCS has superior native capabilities. It's an ecosystem infrastructure gap — the tooling, shared learnings, and community that make a technology practical to operate at scale don't exist yet.

9. Brand Case Studies and Marketing Campaigns

Data source: Web search + LinkedIn hashtag review, March 2026

Meta has an extensive library of WhatsApp Business case studies — brands of all sizes, documented ROI, campaign results shared on LinkedIn regularly.

Google has sparse RCS case studies — mostly India-focused (Airtel's anti-spam collaboration with Google) and German retail brands.

On LinkedIn, #WhatsAppBusiness generates regular posts from marketers sharing campaign results, lessons learned, and agency recommendations. #RCS posts are mostly carrier announcements and vendor posts.

The conference circuit tells the same story. WhatsApp Business appears at developer and marketing conferences — where the audience is practitioners. RCS appears at carrier and enterprise conferences — where the audience is carriers and partners.

10. The Two Conversations

Data source: All 10 platforms aggregated, March 2026

| Platform | WhatsApp Business | RCS |

|---|---|---|

| GitHub repos | 748 | 88 |

| GitHub top stars | 472 | 14 |

| Reddit members | 4,594 | 4 |

| Stack Overflow | Active | Zero new questions since 2022 |

| Hacker News | Regular mentions | None |

| CPaaS tutorial depth | Extensive | Minimal |

| AI agent ecosystem | Mature | Nascent |

| Community forums | Active | Nearly dormant |

| Brand case studies | Extensive | Sparse |

| Carrier momentum | Meta-owned | Real, carrier-controlled |

The pattern: RCS success is real at the carrier and enterprise level. The public community conversation is near-nonexistent. These two facts are not contradictory — they're measuring different things.

What the Gap Actually Means

The RCS conversation isn't small because the opportunity is small. It's small because the ecosystem is.

Two things can be true simultaneously:

Interpretation A: RCS is succeeding quietly in controlled carrier-to-brand channels — the public conversation hasn't caught up because the real action is in carrier boardrooms, not developer forums.

Interpretation B: Early adopters are hitting friction — carrier inconsistency, iOS limitations, immature testing tools, no shared learnings — and giving up quietly rather than sharing what they've learned.

Both are probably true to some degree. The Bandwidth data (26% adoption) suggests real momentum. The community data suggests that momentum isn't self-reinforcing the way WhatsApp's has been.

The Opportunity

The brands that build RCS capabilities now have the chance to define the playbook rather than follow one.

There's no established thought leader on RCS marketing operations — no one who has published detailed learnings about what works and what doesn't at the brand level. The carriers have their positions. The CPaaS platforms have product pages. But the practitioner's guide to running RCS marketing campaigns at scale doesn't exist yet.

The ecosystem gap is real. But gaps are where opportunities live.

RCS X is building the testing and validation layer the ecosystem currently lacks — the infrastructure for brands to validate their RCS experiences before they go to market, across devices and carriers, with confidence.

That's the missing piece. And it's why this data matters: it tells us where we are, so we know where to build.

Sources

- GitHub REST API (repository counts, stars, update dates) — March 2026

- Reddit REST API (member counts, post activity) — March 2026

- Stack Exchange API (question activity, view counts) — March 2026

- HN Algolia API and site search — March 2026

- Twilio.com documentation (WhatsApp, RCS) — March 2026

- Vonage.com developer documentation — March 2026

- Bandwidth.com product pages — March 2026

- Bandwidth State of Messaging Report 2026

- Industry news: MENAFN, CXMToday, TechCrunch, 9to5Mac, MacRumors (March 2026)

MCP Per-Message Authentication Patterns for Production RCS Agents

The security gap in MCP-connected RCS messaging agents — and the hardening patterns that close it.

Introduction

The MCP security crisis is accelerating. In 60 days, the Model Context Protocol ecosystem has logged 30 CVEs — with 36% of deployed MCP servers running zero authentication. That's not a theoretical risk. That's a production emergency.

But here's the conversation nobody's having: what happens when MCP connects to messaging infrastructure?

If you're building RCS messaging agents that use MCP as their orchestration layer, the per-message authentication gap becomes a compliance issue. Brand-validated content flowing through carrier networks needs auditability. MCP's session-based model doesn't natively provide that.

This post breaks down the attack surface, hardening patterns, and what teams need to implement before going to production.

The Problem: Why Per-Message Auth Matters for RCS

MCP's Session-Based Model

MCP tools operate on a session authentication model. Once connected, any tool call within the session passes through. The auth happens at session start — not per request.

This works fine for many use cases. But for RCS messaging, it creates a gap:

- Session hijacking: If a session token is compromised, every message in that session is exposed

- Payload tampering: No per-message integrity check means requests can be modified after the session authenticates

- Compliance risk: Brands sending through carrier networks need to prove message integrity — MCP's model doesn't support this natively

The Numbers

- 30 CVEs in 60 days in the MCP ecosystem

- 36% of MCP servers running with zero authentication

- Per-message auth gap confirmed across Microsoft AutoGen, LangChain, and Vercel AI GitHub issues

Attack Surface Analysis

Session Hijacking Scenarios

- Token exfiltration: Session tokens stored in logs or memory dumps

- Man-in-the-middle: Unencrypted traffic interception during session establishment

- Cross-site replay: Valid session tokens reused from cached requests

Payload Tampering

MCP tool calls pass JSON payloads. Without per-message signing, an attacker who gains session access can:

- Modify message content after validation

- Inject additional recipients

- Alter metadata (timestamps, sender IDs)

Carrier Accountability

RCS messages travel through carrier infrastructure. Brands are accountable for content that enters that network. MCP's session auth provides no mechanism to:

- Prove a specific message wasn't tampered with

- Demonstrate chain of custody for audit purposes

- Verify the request originated from the expected agent

Hardening Patterns That Work

1. Session Token Rotation

What: Short-lived tokens with frequent refresh cycles.

    // Rotate session tokens every 5 minutes
    const SESSION_TTL_MS = 5 * 60 * 1000;
    let lastRotation = Date.now(); // reset whenever a new token is issued
    const needsRotation = () => Date.now() - lastRotation > SESSION_TTL_MS;

Why it helps: Limits exposure window. Even if a token is compromised, it's only valid for a short window.

RCS-specific: For high-volume messaging agents, rotate between message batches, not just by time.
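Rotating on time or batch size, whichever comes first, could be sketched as follows. `issueToken` is a hypothetical callback standing in for however your server mints session tokens, and the default batch size of 100 is an arbitrary assumption.

```javascript
// Sketch: rotate a session token on whichever comes first,
// a TTL or a message-batch limit.
// issueToken is a hypothetical callback; it is not part of any MCP SDK.
class RotatingSession {
  constructor(issueToken, { ttlMs = 5 * 60 * 1000, maxMessages = 100, now = Date.now } = {}) {
    this.issueToken = issueToken;
    this.ttlMs = ttlMs;
    this.maxMessages = maxMessages;
    this.now = now; // injectable clock, useful in tests
    this.rotate();
  }

  rotate() {
    this.token = this.issueToken();
    this.issuedAt = this.now();
    this.sent = 0;
  }

  // Call once per outgoing message; returns the token to use for it.
  tokenForNextMessage() {
    const expired = this.now() - this.issuedAt > this.ttlMs;
    const batchDone = this.sent >= this.maxMessages;
    if (expired || batchDone) this.rotate();
    this.sent += 1;
    return this.token;
  }
}
```

Injecting the clock keeps the rotation logic testable without waiting five minutes.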

2. HMAC Request Signing

What: Add a cryptographic signature to each message payload, independent of the MCP session.

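    const crypto = require('crypto');

    // Sign each message payload with a secret key.
    // Note: JSON.stringify output depends on key order, so the signer and
    // the verifier must serialize the payload identically.
    const signPayload = (payload, secret) => {
      const hmac = crypto.createHmac('sha256', secret);
      hmac.update(JSON.stringify(payload));
      return hmac.digest('hex');
    };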

Why it helps: Even with session access, tampering is detectable because signatures won't match.

RCS-specific: Include RCS-specific fields (destination address, brand ID) in the signed payload to prevent redirect attacks.
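Signing only helps if something verifies the signature before the message is sent. The sketch below pairs signing with verification using Node's built-in `crypto` module. It sorts top-level keys for a stable serialization and compares signatures with `crypto.timingSafeEqual`. The message shape (`brandId`, `destination`, `text`) and the secret are assumptions for illustration.

```javascript
const crypto = require('crypto');

// Serialize with sorted top-level keys so signer and verifier hash identical bytes.
// (Nested objects would need the same treatment; omitted to keep the sketch short.)
const canonicalize = (payload) =>
  JSON.stringify(Object.keys(payload).sort().map((k) => [k, payload[k]]));

const sign = (payload, secret) =>
  crypto.createHmac('sha256', secret).update(canonicalize(payload)).digest('hex');

// Constant-time comparison; returns false on any mismatch or malformed signature.
const verify = (payload, signature, secret) => {
  const expected = Buffer.from(sign(payload, secret), 'hex');
  const given = Buffer.from(String(signature), 'hex');
  return given.length === expected.length && crypto.timingSafeEqual(given, expected);
};

// Hypothetical message shape: destination and brandId are inside the signed payload.
const message = { brandId: 'brand-123', destination: '+15550100', text: 'Your order shipped' };
const signature = sign(message, 'demo-secret');

console.log(verify(message, signature, 'demo-secret'));                                  // true
console.log(verify({ ...message, destination: '+15550199' }, signature, 'demo-secret')); // false
```

Because the destination is part of the signed bytes, redirecting the message to another number invalidates the signature.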

3. Tool Permission Tiers

What: Separate MCP tools into read-only and write permissions. Messaging tools require elevated permissions.

    const TOOL_PERMISSIONS = {
      read: ['get_conversation_history', 'get_contact_info'],
      write: ['send_rcs_message', 'send_rich_card'],
      admin: ['update_brand_settings', 'manage_webhooks']
    };

Why it helps: Limits blast radius. Compromised session can only access permitted tools.

RCS-specific: Carve out separate tool permissions for different message types — transactional vs. marketing vs. authentication messages.
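A permission map is only useful with a check in front of every tool call. Here is one possible sketch of that check. The tier names mirror the map above, while the ordering (read below write below admin) and the deny-by-default behavior are assumptions.

```javascript
// Sketch: tiered tool authorization with a deny-by-default check.
const TOOL_PERMISSIONS = {
  read: ['get_conversation_history', 'get_contact_info'],
  write: ['send_rcs_message', 'send_rich_card'],
  admin: ['update_brand_settings', 'manage_webhooks'],
};
const TIER_ORDER = ['read', 'write', 'admin']; // assumed ordering, lowest first

// Returns the tier a tool belongs to, or null for unknown tools.
const requiredTier = (toolName) =>
  TIER_ORDER.find((tier) => TOOL_PERMISSIONS[tier].includes(toolName)) ?? null;

// A session may call a tool only if its tier is at or above the tool's tier.
// Unknown tools and unknown session tiers are denied (least privilege).
const canCall = (sessionTier, toolName) => {
  const needed = requiredTier(toolName);
  const have = TIER_ORDER.indexOf(sessionTier);
  return needed !== null && have >= TIER_ORDER.indexOf(needed);
};

console.log(canCall('read', 'send_rcs_message'));  // false
console.log(canCall('write', 'send_rcs_message')); // true
```

Splitting `write` further by message type (transactional, marketing, authentication) would follow the same pattern with more tiers or per-tool scopes.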

4. Audit Trail Requirements

What: Log every message with session ID, timestamp, payload hash, and signing key ID.

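    const crypto = require('crypto');

    // Hex-encoded SHA-256 digest of a string or Buffer
    const sha256 = (data) => crypto.createHash('sha256').update(data).digest('hex');

    // `context` and `payload` come from the surrounding tool-call handler
    const auditLog = {
      timestamp: new Date().toISOString(),
      sessionId: context.sessionId,
      payloadHash: sha256(JSON.stringify(payload)),
      signerKeyId: context.activeSigningKey,
      toolName: context.toolName,
      destination: payload.destination
    };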

Why it helps: Provides evidence chain for compliance audits. Proves messages weren't modified.

RCS-specific: Retain logs for carrier audit requirements (typically 90+ days).
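To show how such a record supports an audit, the sketch below builds an entry at send time and later checks whether a disputed payload matches the logged hash. The context field names follow the example above; the sample values and the `matchesEntry` helper are hypothetical.

```javascript
const crypto = require('crypto');

const sha256Hex = (text) => crypto.createHash('sha256').update(text).digest('hex');

// Build one audit record. How `context` is populated is an assumption
// about your agent runtime.
const buildAuditEntry = (context, payload, now = new Date()) => ({
  timestamp: now.toISOString(),
  sessionId: context.sessionId,
  payloadHash: sha256Hex(JSON.stringify(payload)),
  signerKeyId: context.activeSigningKey,
  toolName: context.toolName,
  destination: payload.destination,
});

// During an audit: does this payload match what was logged at send time?
const matchesEntry = (entry, payload) =>
  entry.payloadHash === sha256Hex(JSON.stringify(payload));

const ctx = { sessionId: 's-1', activeSigningKey: 'key-2026-03', toolName: 'send_rcs_message' };
const sent = { destination: '+15550100', text: 'Your code is 123456' };
const entry = buildAuditEntry(ctx, sent);

console.log(matchesEntry(entry, sent));                          // true
console.log(matchesEntry(entry, { ...sent, text: 'tampered' })); // false
```

Storing the hash rather than the payload keeps message content out of the log while still letting you prove what was sent.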

RCS-Specific Considerations

Brand vs. Carrier Accountability

When a brand sends an RCS message, both the brand and the carrier have accountability:

- Brand: Responsible for content and consent

- Carrier: Responsible for delivery and spam filtering

MCP hardening helps brands maintain the accountability chain on their side. If a dispute arises ("this message was sent without our consent"), signed payloads prove the request came from the expected agent.

Rich Media Content Integrity

RCS rich cards, carousels, and AI suggestions include visual assets (images, CTAs). These need integrity verification too:

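    // Reuses signPayload from the HMAC example; sha256 is a hex SHA-256 helper.
    // card.imageData is assumed to be a string (for example, base64-encoded bytes).
    const signRichMedia = (card, secret) => {
      const mediaHash = sha256(card.imageUrl + card.imageData);
      return signPayload({ ...card, mediaHash }, secret);
    };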

Compliance Documentation

Enterprise deployments typically need:

- Security architecture diagram

- Key rotation policy

- Incident response procedures

- Audit log retention policy

Implementation Checklist

Session Governance

- Session tokens expire within 5 minutes

- Token rotation happens automatically

- Sessions are invalidated on error conditions

- No session tokens in logs or error messages

Request Signing

- Every message payload is HMAC-signed

- Signature includes destination and brand ID

- Signatures are verified before sending

- Invalid signatures trigger alerts

Permission Tiers

- Read vs. write tool separation implemented

- Elevated permissions require additional auth

- Permission denials are logged

- Least-privilege default for new sessions

Testing

- Simulated session hijacking detected

- Payload tampering detected

- Permission escalation blocked

- Audit logs capture required fields

What Comes Next

MCP Spec Evolution

The MCP community is aware of the per-message auth gap. Proposals for native per-message authentication are under discussion. Until then, hardening patterns are the responsibility of the implementing team.

Native Per-Message Auth

Future MCP versions may include:

- Built-in payload signing

- Per-request authentication tokens

- Native audit log support

Security Best Practices

The 30 CVEs in 60 days is a wake-up call. Teams deploying MCP in production need to:

- Assume zero trust by default

- Add layers on top of the MCP session model

- Test security assumptions, not just functional behavior

Related Topics

- MCP Messaging Provider Comparison 2026

- The MCP Security Readiness Gap for RCS Business Messaging

- The Launch-State Governance Gap in RCS

Building secure RCS messaging agents? Follow along for more implementation guides and security patterns.

Building RCS-Enabled AI Agents: Why RCS is Now the Best Option for Communicating Agents

The 2026 developer's guide to building communicating AI agents. Part 1 of 3: Why RCS has become the top choice.

Introduction

The AI coding agent revolution is here. Tools like Claude Code, Cursor, and OpenAI Codex have captured the imagination — and workflows — of developers worldwide. Claude Code alone has achieved 46% developer preference as the most loved AI coding tool of 2026.

But here's what's happening in the trenches: developers aren't just building code. They're building communicating agents — AI systems that need to interact with users through messaging channels.

And the question we're seeing everywhere is: "How do I add RCS to my AI agent?"

After analyzing the landscape, here's the 3-step framework every developer needs:

- Build → Choose the right channel

- Choose → Select your CPaaS vendor

- Test → Validate before production

Today, we're diving into Step 1: Why RCS is now the best option for communicating agents.

The Communicating Agent Paradigm Shift

AI agents have evolved beyond code generation. They're becoming:

- Customer service representatives handling support conversations

- Sales development bots qualifying leads via chat

- Notification systems delivering proactive updates

- Transaction assistants processing requests end-to-end

Each of these requires a messaging channel to communicate with users.

Channel Options in 2026

| Channel | Rich Media | Verification | App Required | Global Reach |

|---|---|---|---|---|

| SMS | ❌ | ❌ | ❌ | ✅ |

| WhatsApp | ✅ | ✅ | ✅ | ✅ |

| Telegram | ✅ | ⚠️ | ✅ | ✅ |

| RCS | ✅ | ✅ | ❌ | Growing |

Why RCS is Now the Best Option

1. Rich Media Without the App Download

This is the game-changer. RCS delivers rich cards, carousels, AI suggestions, and visual branding directly in the native messaging app. No app installation required.

Users already have Google Messages (Android) or Apple's Messages app (iOS 18 and later, which supports RCS). Your agent's rich media just... works.

Compare to WhatsApp: Users must install WhatsApp, opt in, and keep the conversation inside that ecosystem. Friction = drop-off.

2. Carrier Verification (The Green Tick)

RCS offers brand verification — users see the verified business logo and name. This builds trust instantly.

In an era of AI-powered phishing and spam, this verification is becoming essential. Users are trained to look for the green tick.

3. 3-7x Higher CTR Than SMS

Bandwidth's 2026 State of Messaging report confirms: RCS achieves 3-7x higher click-through rates than SMS for equivalent messages.

Why? Rich cards with images, buttons, and clear CTAs. Plain text SMS can't compete.

4. No App Switching

Users don't leave their messaging app. The conversation stays where it started. This means:

- Higher engagement

- Better conversation continuity

- Lower abandonment rates

5. Cost Efficiency at Scale

RCS is priced similarly to or lower than SMS for equivalent reach, but delivers much higher engagement. The cost per meaningful interaction is actually lower than SMS.

6. Growing Global Coverage

| Region | Status |

|---|---|

| United States | Major platforms approved (Twilio, Bandwidth, Tells) |

| India | Google + Airtel anti-spam collaboration |

| Europe | Twilio + KPN nationwide rollout |

| Southeast Asia | Expanding |

2026 is the year US RCS goes mainstream.

When to Choose RCS

Ideal for:

- Brands with Android user base

- Transactional messaging (orders, reservations, alerts)

- Marketing campaigns requiring visual appeal

- Enterprise brands needing verification

When to fall back:

- 100% iOS user base (RCS on iOS is still maturing)

- Ultra-low-latency requirements

- Markets where RCS isn't available

What's Next: Choosing Your CPaaS

Now that you've decided RCS is the right channel, the next question is: Which CPaaS vendor should you use?

In the next post, I'll break down the major players — Twilio, Infobip, Bandwidth, Sinch — and help you choose based on your use case, scale, and timeline.

Stay tuned for Part 2: The CPaaS Vendor Comparison Guide.

Related Topics

- MCP Messaging Provider Comparison 2026

- The SMS to RCS Migration Playbook

- RCS Analytics: Optimizing Your Campaigns

This is Part 1 of our 3-part series on building RCS-enabled AI agents. Follow along for the complete roadmap.

The SMS to RCS Migration Playbook: Why 2026 is the Year Brands Make the Switch

A practical framework for brands transitioning from SMS to RCS business messaging in 2026.

The writing is on the wall: 26% of brands are already sending RCS, and more than twice that are preparing to make the switch. If you're still relying solely on SMS, here's what's changing — and how to migrate without breaking your existing workflows.

The Data Speaks — RCS Adoption Reaches Critical Mass

The Bandwidth State of Messaging 2026 report confirms what we're seeing in the market: RCS has crossed the chasm. Twenty-six percent of brands already have RCS live in production, and more than twice that share are actively preparing their migration paths.

This isn't early-adopter hype. This is mainstream momentum driven by three converging factors:

- Device penetration — Google Messages now ships RCS-ready on most Android devices in the US and Europe

- Carrier support — Tells became one of the first US platforms approved for RCS Business Messaging this month

- Brand demand — Customers are demanding richer, more secure communication channels

The key insight? Early adopters are seeing 4-5x higher click-through rates compared to SMS. The question isn't IF RCS becomes the standard — it's how fast you can adapt.

What You're Losing by Sticking with SMS

SMS served us well for three decades. But the economics are shifting:

- Character limits force multi-message threads that frustrate customers

- No rich media means no product visualization, no carousel catalogs, no interactive buttons

- Rising costs at scale as carriers increase per-message fees

- Security gaps create phishing and spoofing concerns that damage trust

- Perception problems — customers increasingly see SMS as "2005 technology"

The real cost isn't the per-message fee. It's the lost conversion opportunities when your message looks generic in a sea of plain text.

The 5 Key Advantages of RCS Over SMS

- Rich media — Carousels, images, video previews, and interactive buttons that make messages look like mobile app experiences

- Verified sender badges — Trust signals that prove your brand is legitimate

- Built-in analytics — Read receipts, engagement metrics, and conversion tracking

- No limits — No character caps, no message count restrictions

- AI-native architecture — Easy integration with AI agents for automated conversations

The Migration Framework — 4 Phases

Phase 1: Audit & Strategy (Week 1-2)

- Inventory your current SMS use cases

- Identify highest-value migration candidates (customer service notifications, order updates)

- Define success metrics (engagement rate, conversion rate, cost per message)

Phase 2: Technical Setup (Week 3-4)

- Choose your CPaaS provider (Twilio, Infobip, Sinch, Bandwidth)

- Set up your RCS business profile with verified branding

- Configure SMS fallback for non-RCS devices
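The fallback step in Phase 2 could be sketched like this. `checkRcsCapability`, `sendRcs`, and `sendSms` are hypothetical promise-returning adapters around whatever your CPaaS provider exposes, not real SDK calls. Some providers handle fallback on their side, in which case this logic is unnecessary.

```javascript
// Sketch: choose RCS when the device supports it, otherwise fall back to SMS.
// The three injected functions are hypothetical adapters, not real SDK calls.
const sendWithFallback = async (message, { checkRcsCapability, sendRcs, sendSms }) => {
  // Treat a failed capability lookup as "not capable" rather than failing the send.
  const rcsCapable = await checkRcsCapability(message.to).catch(() => false);
  if (rcsCapable) {
    try {
      await sendRcs(message.to, message.richCard);
      return 'rcs';
    } catch (err) {
      // Fall through to SMS if the RCS send itself fails.
    }
  }
  await sendSms(message.to, message.fallbackText);
  return 'sms';
};
```

Returning the channel actually used makes it easy to report RCS and SMS engagement separately later.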

Phase 3: Content Migration (Week 5-6)

- Rebuild your top 3 SMS flows as RCS rich cards

- Test on real devices (Android Google Messages, iOS when available)

- Validate fallback behavior works correctly

Phase 4: Launch & Iterate (Week 7+)

- Soft launch to a subset of your audience

- Measure engagement against your SMS baseline

- Scale based on performance data
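Measuring against the SMS baseline comes down to simple arithmetic. A small sketch, with made-up numbers used purely for illustration:

```javascript
// Sketch: compare RCS click-through rate against an SMS baseline.
const ctr = ({ clicks, delivered }) => (delivered > 0 ? clicks / delivered : 0);

// Lift as a multiple of the baseline, e.g. 4 means "4x the SMS CTR".
const ctrLift = (rcs, sms) => {
  const baseline = ctr(sms);
  return baseline > 0 ? ctr(rcs) / baseline : null; // null: no baseline to compare against
};

// Made-up numbers, for illustration only.
console.log(ctrLift({ clicks: 120, delivered: 1000 }, { clicks: 30, delivered: 1000 })); // 4
```

Compare like with like: same audience segment, same offer, and the same time window for both channels.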

Common Pitfalls and How to Avoid Them

- "We'll just send the same message to everyone" — Personalization is the entire point of RCS. Don't waste the opportunity.

- "Skip testing on real devices" — Device fragmentation is real. What renders on Pixel might break on Samsung.

- "RCS replaces SMS entirely" — Always maintain SMS fallback. Not all devices support RCS yet.

- "Set it and forget it" — Continuous optimization is required. Monitor metrics and iterate.

The AI Integration Opportunity

The biggest opportunity isn't just rich media — it's combining RCS with AI agents for automated, personalized conversations at scale:

- Product catalogs inside the chat

- Real-time personalization based on user data

- Infobip + Vertex AI and similar integrations are making this easier

Why does this matter? You can now compete with app-like experiences without requiring customers to download anything.

Your Migration Checklist

- Audit current SMS volume and use cases

- Identify 1-2 pilot use cases for RCS

- Select CPaaS provider

- Set up RCS business profile

- Build fallback SMS strategy

- Test rich content on real devices

- Define engagement KPIs

- Plan launch timeline (8-12 weeks typical)

Ready to Migrate?

The migration isn't as hard as it seems — but it does require a plan. Start with one use case, test in sandbox, and iterate based on real engagement data.

The brands making the switch now are seeing the payoff. Don't get left behind as your customers migrate to RCS-capable devices and expect richer experiences.

Ready to test your RCS campaigns before launch? RCS X gives you instant access to a production-like emulator, event console, and payload validator. Prototype faster, test deeper, and ship with confidence.

The US RCS Floodgates Are Opening: What Brands Need to Know

Tells became one of the first US platforms approved for RCS Business Messaging. This marks a turning point for US brand adoption — here's what it means for your strategy.

More US platforms means faster time-to-market — but also fragmentation risk. Here's how to prepare.

Just got word: Tells became one of the first US platforms approved for RCS Business Messaging this week.

This is a big deal.

For years, RCS adoption in the US has been held back by limited carrier support, complex approval processes, and a lack of trusted platforms. While Europe and Asia surged ahead with RCS-powered customer engagement, US brands waited.

Now we're seeing the pieces fall into place.

The US RCS Landscape Before Now

The US market has lagged behind Europe and Asia in RCS adoption for several key reasons:

- Carrier fragmentation — Unlike Europe where single carriers dominate markets, US mobile carriers operate in a fragmented landscape with varying levels of RCS readiness

- Complex approval processes — Brands seeking to launch RCS campaigns faced lengthy carrier negotiations with no clear timeline

- Limited vendor options — Without approved platforms, brands had to go carrier-direct — a high-barrier approach

Meanwhile, in Europe, RCS adoption surged. In Germany alone, 80%+ of smartphone users are RCS-eligible. Asian markets like Japan and South Korea have been RCS leaders for years.

What the New Approvals Mean

When a platform gets approved for RCS Business Messaging, it means they've met Google's carrier requirements and can serve as an intermediary for brands wanting to send RCS messages.

This is different from carrier-direct integration:

- Platform-approved routes skip the carrier negotiation queue

- Unified APIs work across multiple carriers through a single integration

- Faster onboarding — brands can go live in weeks, not months

Tells joining the approved list signals that more US-focused platforms are getting ready. Expect Twilio, Infobip, Sinch, and Bandwidth to expand their US RCS offerings throughout 2026.

The Competitive Implications

Here's what this means for brands:

1. Faster Time-to-Market

Approved platforms mean you skip the carrier negotiation queue. Instead of 6-12 months of carrier conversations, brands can go live in weeks.

2. More Vendor Options

Competition drives innovation and better pricing. You'll have more choices for RCS implementation — from pure-play CPaaS providers to integrated customer engagement platforms.

3. US Market Credibility

When US platforms invest in RCS, the channel becomes legit. Marketing leaders can confidently add RCS to their channel mix without worrying about vendor viability.

4. Fragmentation Risk

With more platforms comes more choice — but also potential fragmentation. Different platforms may support different features, pricing tiers, or carrier coverage.

The Strategic Imperative

Here's the catch: with more platforms comes more fragmentation.

Your RCS strategy needs to be platform-agnostic. The brands winning today are building on abstraction layers — so they can switch providers without rewriting campaigns.

Key recommendations:

- Build on abstraction layers — Use provider-agnostic APIs where possible

- Start testing now — Don't wait for the "perfect" moment

- Evaluate vendors on carrier coverage — Not all platforms have equal US reach

- Plan for portability — Ensure your campaigns can migrate between providers
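
One way to picture the abstraction-layer recommendation is a thin adapter per provider, so campaign code only ever handles a neutral message shape. The sketch below is a minimal illustration: `providerA`, `providerB`, and both payload shapes are invented for the example and do not correspond to any real vendor's API.

```javascript
// Provider-neutral message shape used by campaign code.
const message = {
  to: "+15550100",
  text: "Your order has shipped.",
  suggestions: ["Track order", "Contact support"],
};

// Hypothetical adapters: these payload shapes are invented for illustration
// and do not match any real vendor's API.
const adapters = {
  providerA: (msg) => ({
    recipient: msg.to,
    body: { text: msg.text },
    replies: msg.suggestions.map((label) => ({ label })),
  }),
  providerB: (msg) => ({
    destination: msg.to,
    content: msg.text,
    quickReplies: msg.suggestions,
  }),
};

// Campaign code calls this and never builds a vendor payload directly.
function buildPayload(providerName, msg) {
  const adapter = adapters[providerName];
  if (!adapter) throw new Error(`Unknown provider: ${providerName}`);
  return adapter(msg);
}
```

Under these assumptions, switching providers means adding or changing one adapter while the campaign definitions stay untouched.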

What's Next

Expect more US platform approvals throughout 2026. As the ecosystem matures:

- More brands will launch RCS campaigns

- Competition will drive pricing down

- Feature parity will improve across vendors

- Cross-carrier reliability will stabilize

The question isn't "if" RCS goes mainstream in the US.

It's whether you'll be ready when it does.

Related Resources

- MENAFN: Tells RCS Business Messaging Approval

- Bandwidth State of Messaging Report 2026

- CXMToday: Twilio KPN RCS Rollout

Sources: MENAFN (March 13, 2026), CXMToday (Twilio+KPN RCS), TechCrunch (Google+Airtel India spam), 9to5Google (March 2026 features), Bandwidth State of Messaging 2026

Want to prepare your brand for the RCS wave? Start testing at RCS X

Why Brands Are Racing to RCS in 2026 — And Why Most Are Failing at AI Integration

The infrastructure is ready. The carriers are on board. But the AI agent integration layer is missing — and it's costing brands precious time in the market.

The fix? Build your AI agent integration strategy BEFORE you launch RCS.

The infrastructure debate is over. The carriers are on board. Google and Apple both support it. The only question left: are you ready?

Gartner predicts advanced messaging (RCS, WhatsApp) will overtake SMS for customer service by 2028. RCS business messaging traffic grew 550% in 2024 alone — following 358% growth in 2023. Apple RCS support since iOS 18 means universal reach on modern smartphones. In Germany alone, 80%+ of smartphone users are RCS-eligible.

26% of brands are already sending RCS. More than twice that are preparing.

But here's what most brands are missing.

The AI Integration Imperative

They're building RCS strategies around broadcast messages. They're not ready for conversational AI at scale.

Gartner: Organizations leveraging AI in messaging will see 50% more engagement and ROI by 2029. AI + RCS means product finders, automated booking, lead qualification — all inside native messaging.

Yet four hidden gaps are blocking most brands from executing.

The Four Hidden Gaps

1. Multi-Channel Simulation Failure

Your agent passes tests but fails in production. The same agent logic yields 73% success in voice vs 61% in chat. Channel-specific rendering breaks flows in ways testing can't predict.

2. MCP Session Governance

Connecting AI agents to RCS via MCP is the modern approach. But three issues create reliability risks in production workflows: session expiry without auto-recovery (see OpenAI Codex issue #13969), tool duplication in multi-turn sessions, and the lack of a standardized reconnection pattern.

3. Cross-Channel Parity

Voice, chat, SMS, RCS — same agent, different failure modes. Rich media rendering differs across devices and carriers. Teams ship "lowest common denominator" experiences to avoid breakage.

4. Pre-Launch Validation

Rich cards, carousels, AI templates render differently across devices. There's no way to validate before carrier launch. Teams discover rendering issues post-launch — that's when customer complaints roll in.

The Competitive Window

Early RCS adopters are capturing 3-7x higher CTRs. Brands with AI-powered flows see 58% messaging resolution rate vs voice. Companies with multi-channel strategies serve 73% of customer journeys that span multiple channels.

2026 is the critical window to establish RCS channel authority. In 2027, saturation begins. By 2028, differentiation gets much harder.

The question isn't whether to adopt RCS. It's whether you'll be ready when your competitors are.

Related Resources

- Bandwidth State of Messaging Report 2026

- Hello Charles: 9 Reasons Why Brands Are Switching to RCS

- Hamming AI: Multi-Modal Testing Data

- RCS X: Validate your AI agent integration before going live

Sources: Gartner (January 2026), Sinch RCS Benchmarks, Infobip Messaging Trends 2025, Bandwidth State of Messaging 2026, Hamming AI Multi-Modal Testing Data, GitHub issues (OpenAI Codex, n8n, Cloudflare Agents)

Ready to close your AI integration gaps? Start testing at RCS X

The CPaaS RCS Gap: Why AI Agent Developers Are Stuck

Enterprise Connect 2026 was all about AI Agents. But RCS — the most promising messaging channel for AI agents — was conspicuously absent from major CPaaS announcements. Here's why that matters.

The AI Agent wave is here. But the messaging layer that could make them powerful is being ignored.

The Enterprise Connect 2026 Narrative

The big CPaaS providers just wrapped up Enterprise Connect 2026 with one clear message: AI Agents are the future.

Infobip announced AgentOS. Vonage won Best of Show for Contact Center AI. Twilio showcased their agentic AI platform. Every booth, every keynote, every hallway conversation centered on one theme: building autonomous AI agents for customer service, sales, and beyond.

But here's what's curious: RCS was barely mentioned.

Not a single major CPaaS announcement about RCS business messaging. No new partnerships. No product launches. No "we're investing heavily in RCS" soundbites.

That's curious because RCS is arguably the most promising messaging channel for AI agents. It offers branding, security, rich cards, and encryption — exactly what enterprise customer service needs.

So why the silence?

The AI Agent Multi-Channel Challenge

Every AI Agent developer faces the same channel decision eventually:

- SMS: Commodity. Low engagement. No branding. Everyone's inbox is flooded.

- WhatsApp: Crowded. Meta-controlled. Good reach, but limited brand differentiation.

- RCS: Branded. Secure. Rich cards, carousels, suggested replies. But... complicated to deploy.

The theory is clear: AI agents need to communicate with users through channels users actually trust and engage with. RCS should be the obvious choice. Rich formatting. Brand verification (the green checkmark). Encryption. Interactive elements.

But the practice is a nightmare.

The Carrier Approval Bottleneck

Here's what deploying RCS actually looks like for an AI Agent developer:

- You need carrier approvals, and not just from one carrier. To reach users across T-Mobile, AT&T, Verizon, and more, you need approvals from each. Timeline: 8-16 weeks.

- You need whitelisted test devices. Unlike API testing, where you can spin up a sandbox in minutes, RCS requires actual devices registered with carriers. Good luck debugging issues at scale.

- You need pre-launch validation. How does your agent handle fallback to SMS? What's the user experience when RCS isn't available? These are questions you need to answer before launch, but the tools to answer them are limited.

The result? RCS stays on the roadmap. "We'll add RCS later." "After we prove the concept with SMS."

The channel that should be the AI Agent developer's best friend becomes a "Phase 2" item. If it ever gets built at all.

What the CPaaS Players Are Actually Offering

Let's be clear: the CPaaS giants have RCS.

- Twilio: RCS Business Messaging GA since September 2024. Solid product.

- Infobip: RCS solution available. Strong carrier relationships.

- Vonage: RCS integrated into their platform.

- MessageBird, Sinch, Bandwidth: All offer RCS.

But here's the pattern: they're not talking about it.

At EC2026, the narrative was entirely AI Agents. RCS was mentioned in passing, if at all. The CPaaS giants are choosing to position themselves as "AI Agent platforms" rather than "messaging platforms."

Why? A few possibilities:

- AI Agents are hotter right now — Easier to sell, more buzz, bigger deals.

- RCS carrier complexity is a hard sell — "8-16 weeks for approval" doesn't fit the "move fast and break things" startup mindset.

- They're waiting for US RCS to mature — The US market still has coverage gaps. Why push hard when the network isn't ready?

Whatever the reason, the result is the same: AI Agent developers who want RCS are on their own.

The Validation Layer Opportunity

This is where we see a gap emerging.

AI Agent developers need to test RCS conversations at scale before carrier approval. They need:

- Simulation of RCS rich cards and carousels

- QA workflows for agent-to-user conversations

- Pre-production validation of fallback behavior

- Device fragmentation testing (Samsung vs. Pixel vs. iOS)

These are the problems RCS X is built to solve.

But more broadly, this represents a positioning opportunity for the ecosystem. Someone will own the "validation layer" for AI Agent + RCS deployment. Right now, no one is claiming that space.

The CPaaS players are busy with AI. The carriers are building infrastructure. The developers are stuck waiting.

There's a middle layer missing: the testing and validation infrastructure that lets AI Agent developers confidently add RCS to their multi-channel strategy.

The Strategic Implication

Here's what this means for the market:

- The RCS adoption curve will lag AI Agent adoption: AI agents will ship with SMS and WhatsApp first. RCS will come later, if at all.

- Early movers who solve testing will win: Developers who can validate RCS conversations pre-launch will have a competitive advantage in customer experience.

- The CPaaS giants may regret the gap: When RCS does take off (and it will, as US coverage improves), the players who own the developer experience will win.

- The window is open: Right now, the market is unsettled. No one has claimed the "validation layer" position. This is the time to build awareness and trust.

The Question for AI Agent Developers

If you're building an AI Agent today, here's the question to ask:

How are you planning to test your RCS deployment before going live?

If the answer is "we'll figure it out during carrier approval" — that's a risk. 8-16 weeks of waiting to discover fundamental UX problems isn't agile.

If the answer is "we don't have a plan yet" — that's an opportunity. There's a better way to validate RCS conversations at scale, independent of carrier approvals.

The AI Agent revolution is here. RCS is the messaging channel that could make those agents powerful. But the bridge between them — testing, validation, confidence — is still being built.

Who's going to build it?

Are you an AI Agent developer dealing with RCS testing challenges? We'd love to hear your experience. Reach out or share in the comments.


RCS API Rate Limits: The Hidden Blocker Costing Brands (and AI Agents) Launch Time

You've built a flawless RCS campaign for your AI agent. Brand assets approved. Agent logic refined. Then your API calls start returning 429s. Here's how to design for rate limits from day one.

The fix? Design for rate limits BEFORE you launch.

The 429 That Killed the AI Agent Launch

You've built a flawless RCS campaign for your AI agent. Brand assets approved. Agent logic refined. Your conversational AI is ready to handle customer service at scale.

Then your RCS API calls start returning 429s.

Your account gets flagged. Days of debugging begin. And suddenly that "AI agent launch" becomes a week of rate limit roulette.

This is happening to more teams building agentic customer service than you'd think.

The RCS API rate limit problem is real, and it's blocking AI agent launches.

Understanding the RCS Rate Limit Landscape for AI Agents

What's different about RCS vs. SMS/MMS rate limits? Let's break it down.

The Three Limit Types

RCS APIs typically enforce three types of limits:

- Per-second limits — How many requests you can make in a single second

- Per-minute limits — Cumulative requests allowed per minute

- Per-day limits — Total daily API call quotas
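
Because the three limit types apply at the same time, a send has to fit inside every window at once. The following is a minimal sketch of a sliding-window check over several windows; the class name and the numeric limits are placeholders for illustration, not published carrier or Google values.

```javascript
// Sliding-window limiter that enforces several windows at once.
// The limits passed in are placeholders, not real carrier quotas.
class MultiWindowLimiter {
  constructor(limits) {
    this.limits = limits; // array of { windowMs, max }
    this.timestamps = []; // accepted send times in ms, oldest first
  }

  // Returns true and records the send only if every window has room.
  tryAcquire(now = Date.now()) {
    const longest = Math.max(...this.limits.map((l) => l.windowMs));
    // Drop entries that are older than the longest window.
    while (this.timestamps.length > 0 && now - this.timestamps[0] >= longest) {
      this.timestamps.shift();
    }
    for (const { windowMs, max } of this.limits) {
      const used = this.timestamps.filter((t) => now - t < windowMs).length;
      if (used >= max) return false;
    }
    this.timestamps.push(now);
    return true;
  }
}

const limiter = new MultiWindowLimiter([
  { windowMs: 1000, max: 10 },        // per second
  { windowMs: 60000, max: 300 },      // per minute
  { windowMs: 86400000, max: 10000 }, // per day
]);
```

Keeping every timestamp is fine for a sketch; at high daily volumes a counter per window would likely be cheaper.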

Why Carriers Create Hidden Ceilings

Google's guidelines are a baseline. But each carrier—Verizon, AT&T, T-Mobile—applies different filters on top. What works for one carrier might trigger blocks from another.

The Test Agent vs. Verified Brand Disparity

This is critical: test agents face different (often lower) rate limits than verified brand accounts. Documentation is sparse, and many teams discover this only after hitting blocks during launch.

Why AI Agents Burn Through Limits Faster

Unlike human-driven campaigns, AI agent workflows can trigger hundreds of API calls in minutes when handling concurrent conversations. One customer service agent handling 50 simultaneous users can quickly exceed limits designed for batch campaigns.
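
A back-of-the-envelope estimate makes the gap concrete. The sketch below multiplies concurrent conversations by turns per minute and API calls per turn. Only the figure of 50 users comes from the text above; the turn rate and calls per turn are assumed values for illustration.

```javascript
// Rough estimate of RCS API calls per minute for concurrent conversations.
function estimateCallsPerMinute({ conversations, turnsPerMinute, callsPerTurn }) {
  return conversations * turnsPerMinute * callsPerTurn;
}

// 50 users (from the text), 2 turns per minute and 3 calls per turn (assumed).
const estimate = estimateCallsPerMinute({
  conversations: 50,
  turnsPerMinute: 2,
  callsPerTurn: 3,
}); // 300
```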

Why AI Agent Conversations Are Hitting Limits Harder

Rich Media = Higher API Cost

Carousel cards, images, and AI-generated suggestions consume more API bandwidth than plain text. Each rich card requires multiple API calls for uploading media, creating the message, and configuring layout.

The Hidden Cost of AI-Suggested Replies

When your AI agent suggests replies or generates dynamic content, each suggestion adds payload size. Carriers render these differently, and the API treats complex messages as higher-cost.

MCP Server Call Patterns

If you're using Model Context Protocol (MCP) servers for your AI agent (Infobip, Sinch, or others), they're making RCS API calls alongside your agent logic. These compound quickly.

The Agentic Rate Limit Architecture Playbook

The teams launching AI agents fastest? They're building rate limit strategy INTO their agent architecture from day one.

4.1 Message Queuing for Agent Conversations

Build a buffer between your AI agent and the RCS API:

- In-memory queues (for example, Redis lists or streams) for high-throughput scenarios

- Persistent queues (RabbitMQ, SQS) for reliability

- Throughput controllers that respect carrier limits

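```javascript
// Example: simple rate limiter for agent messages.
// sendRCSMessage is a placeholder for your provider's send call.
const queue = [];
const RATE_LIMIT = 10; // messages per second

// Draining one message per tick caps throughput at RATE_LIMIT per second.
setInterval(() => {
  if (queue.length > 0) {
    sendRCSMessage(queue.shift());
  }
}, 1000 / RATE_LIMIT);
```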

4.2 Exponential Backoff with Jitter

Simple retries fail because they bunch up. Add jitter to spread out retry attempts:

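```javascript
// rcsClient is a placeholder for your provider's SDK client.
async function sendWithBackoff(message, attempt = 0) {
  const baseDelay = 1000; // ms
  const maxDelay = 30000; // ms
  const jitter = Math.random() * 1000; // spreads out simultaneous retries
  const delay = Math.min(baseDelay * Math.pow(2, attempt) + jitter, maxDelay);

  try {
    return await rcsClient.send(message);
  } catch (error) {
    // Retry only on rate limiting, and at most 5 times.
    // Adjust this check if your client reports the HTTP status elsewhere.
    if (error.code === 429 && attempt < 5) {
      await new Promise(r => setTimeout(r, delay));
      return sendWithBackoff(message, attempt + 1);
    }
    throw error;
  }
}
```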

4.3 Priority Lanes for Agent Traffic

Not all agent messages are equal. Separate by priority:

- Transactional AI responses (high priority) — User queries, order status, account info

- Promotional messages (batchable) — Marketing, updates, newsletters

- System messages (lowest priority) — Logs, diagnostics, non-urgent notifications
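
The three lanes above can be sketched as separate queues drained in strict priority order. This is a minimal illustration, and the lane names and class are hypothetical. Strict priority can starve the lower lanes under sustained load, so a production version would probably reserve some capacity for them.

```javascript
// Lanes in drain order: highest priority first.
const LANES = ["transactional", "promotional", "system"];

class PriorityLanes {
  constructor() {
    this.lanes = new Map(LANES.map((name) => [name, []]));
  }

  enqueue(lane, message) {
    const queue = this.lanes.get(lane);
    if (!queue) throw new Error(`Unknown lane: ${lane}`);
    queue.push(message);
  }

  // Returns the next message from the highest-priority non-empty lane,
  // or undefined when every lane is empty.
  dequeue() {
    for (const name of LANES) {
      const queue = this.lanes.get(name);
      if (queue.length > 0) return queue.shift();
    }
    return undefined;
  }
}
```

A sender loop would call `dequeue()` each time the rate limiter grants a slot.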

4.4 Pre-launch Load Testing with Agent Simulation

Test burst patterns, not just average volume:

- Simulate concurrent AI agent conversations

- Test MCP server call volumes alongside RCS API limits

- Validate under realistic peak loads before go-live
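
To see why burst patterns matter more than averages, the sketch below replays two send schedules with the same total volume against an assumed per-second cap. The function name and the cap of 10 are illustrative assumptions, not a real carrier limit.

```javascript
// Counts how many sends a fixed per-second cap would reject.
// sendTimesMs: send timestamps in ms; perSecondLimit: assumed cap.
function countRejected(sendTimesMs, perSecondLimit) {
  const perSecond = new Map();
  let rejected = 0;
  for (const t of sendTimesMs) {
    const bucket = Math.floor(t / 1000);
    const used = perSecond.get(bucket) || 0;
    if (used >= perSecondLimit) {
      rejected += 1;
    } else {
      perSecond.set(bucket, used + 1);
    }
  }
  return rejected;
}

// Same volume (100 sends within 10 seconds), different shape.
const steady = Array.from({ length: 100 }, (_, i) => i * 100); // 10 per second
const burst = [
  ...Array.from({ length: 50 }, (_, i) => i * 10),        // 50 sends in half a second
  ...Array.from({ length: 50 }, (_, i) => 5000 + i * 10), // 50 more five seconds later
];

const steadyRejected = countRejected(steady, 10); // 0
const burstRejected = countRejected(burst, 10);   // 80
```

With identical average volume, the steady schedule passes cleanly while the bursty one loses most of its sends, which is the pattern a load test should be designed to surface.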

Carrier-by-Carrier Limit Breakdown for Agent Deployments

| Carrier | Notes for AI Agent Deployments |
|---|---|
| Verizon | Strict on burst traffic; prefer steady throughput |
| AT&T | Moderate limits; rich media triggers additional checks |
| T-Mobile | More lenient on test agents; verify before production |
| Regional | Often have lower limits; test thoroughly |

Recovery Strategies When Your AI Agent Gets Blocked

Timeline: Why 24-72 Hours Isn't Unusual

Once rate limited, carriers don't always provide instant recovery. Plan for this gap.

What NOT to Do

- ❌ Don't create new agent accounts (compounds the problem)

- ❌ Don't blast through proxies (will get all accounts flagged)

- ❌ Don't keep retrying immediately (extends the block)

Prevention vs. Cure

Build observability into your RCS AI agent stack:

- Monitor API response times and error rates

- Set up alerts for 429 responses

- Have fallback strategies: SMS, WhatsApp, chat
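
The alerting and fallback items above can be sketched as a small monitor that tracks the share of 429 responses in a recent window, plus a helper that picks the first healthy channel. The class, the thresholds, and the channel keys are assumptions for illustration; tune them to your own traffic.

```javascript
// Tracks recent responses and flags when the share of 429s crosses a threshold.
class RateLimitMonitor {
  constructor({ windowMs = 60000, threshold = 0.05, minSamples = 20 } = {}) {
    this.windowMs = windowMs;
    this.threshold = threshold;
    this.minSamples = minSamples;
    this.events = []; // { at, status }
  }

  // Records one API response and discards entries outside the window.
  record(status, now = Date.now()) {
    this.events.push({ at: now, status });
    this.events = this.events.filter((e) => now - e.at < this.windowMs);
  }

  // True when enough samples exist and the 429 share exceeds the threshold.
  shouldAlert() {
    if (this.events.length < this.minSamples) return false;
    const limited = this.events.filter((e) => e.status === 429).length;
    return limited / this.events.length > this.threshold;
  }
}

// Picks the first channel marked healthy, in the fallback order listed above.
function pickChannel(health, order = ["rcs", "sms", "whatsapp", "chat"]) {
  return order.find((channel) => health[channel]) || null;
}
```

Requiring a minimum sample count keeps a single early 429 from paging anyone.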

The Future of RCS Rate Limits for Agentic AI

Are Limits Loosening?

Industry trends suggest carriers are gradually increasing limits for verified brand accounts, but AI agent deployments are outpacing these changes.

Google's Potential API Improvements

Watch for 2026 updates to RCS Business Messaging APIs with better rate limit handling and more granular controls.

AI-Driven Traffic Shaping

The solution may be AI itself—intelligent traffic shaping that predicts load and pre-emptively throttles non-critical messages.

Conclusion: Rate Limits Are a Feature, Not a Bug for Agentic AI

The old approach: "We'll figure out the limits after it works." The new approach: "Design for limits, then scale."

As AI agents become the primary interface for customer service, RCS is the secure, branded channel of choice. But agentic reliability requires engineering every layer—including rate limit strategy.

What's your rate limit horror story with AI agents? Drop it in the comments 👇

Related Resources

- Google RCS Business Messaging Best Practices

- Bandwidth State of Messaging Report 2026

- RCS X: Test your agent deployments before going live

Ready to validate your AI agent's RCS strategy? Start testing at RCS X

The Interoperability Readiness Gap in RCS: Protocol Progress Is Outpacing Trust Operations

RCS protocol momentum is accelerating, but many teams still lack cross-channel trust orchestration, telemetry reconciliation, and pre-launch validation needed for reliable AI-agent messaging at scale.

RCS in 2026 is moving from capability headlines to execution reality.

On paper, momentum looks strong:

- Apple is testing end-to-end encrypted RCS flows in iOS 26.4 beta for iPhone↔Android conversations.

- GSMA continues formalizing encryption and interoperability expectations in Universal Profile evolution.

- Large-market anti-spam collaboration (like Google + Airtel) is raising trust and safety expectations for business messaging programs.

For messaging teams, this is real progress. But it also exposes a new bottleneck: interoperability readiness in operations, not just interoperability in protocol specs.

The Gap Hiding Behind Momentum

Most teams can now integrate APIs and send messages. Fewer teams can confidently answer:

- Are trust states consistent across channels and systems?

- Can we reconcile anti-spam and abuse signals in time to protect campaigns?

- Can we validate encrypted, rich, and fallback paths before full-scale launch?

That is the interoperability readiness gap.

Three Failure Patterns We Keep Seeing

1) State Mapping Drift

A message marked “sent” in one platform may appear in a different effective state elsewhere once trust controls, retries, or policy checks are applied.
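
One way to catch this drift is to normalize every system's status vocabulary into a shared set of states and then compare records by message ID. The sketch below assumes two hypothetical providers; the status names are invented for illustration, and real vocabularies vary by vendor.

```javascript
// Hypothetical provider vocabularies mapped to shared canonical states.
const STATUS_MAP = {
  providerA: { ACCEPTED: "queued", SENT: "sent", DELIVERED: "delivered", DISPLAYED: "read", REJECTED: "failed" },
  providerB: { pending: "queued", dispatched: "sent", delivered: "delivered", seen: "read", undeliverable: "failed" },
};

// Maps a provider status to a canonical state. Unknown values surface as
// "unknown" instead of being silently coerced.
function normalizeStatus(provider, status) {
  const table = STATUS_MAP[provider];
  if (table && Object.prototype.hasOwnProperty.call(table, status)) {
    return table[status];
  }
  return "unknown";
}

// Lists message IDs whose canonical state differs between two systems.
// Each argument is an object of messageId -> canonical state.
function findDrift(recordsA, recordsB) {
  const drift = [];
  for (const [id, stateA] of Object.entries(recordsA)) {
    const stateB = recordsB[id];
    if (stateB !== undefined && stateB !== stateA) {
      drift.push({ id, stateA, stateB });
    }
  }
  return drift;
}
```

Running `findDrift` on a schedule gives an early signal before mismatched states turn into reporting or compliance problems.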

2) Fragmented Trust Telemetry

Opt-outs, complaint signals, abuse flags, and reputation events often live in separate systems with weak correlation. Teams cannot close the loop quickly.

3) Pre-Launch Validation Debt

Organizations test happy-path flows but skip difficult conditions: fallback behavior, policy edge cases, rendering variance, and channel-state transitions under load.

Why AI-Agent Messaging Feels This First

AI-agent programs increase conversation volume, variability, and speed. That amplifies the cost of interoperability blind spots:

- small state mismatches become campaign-level errors,

- weak trust reconciliation increases suspension/compliance risk,

- delayed validation creates expensive post-launch firefighting.

The Operating Shift for 2026

High-performing teams are moving from “channel enablement” to trust orchestration:

- Instrument shared trust telemetry across systems.

- Add pre-launch simulation for fallback + policy edge paths.

- Enforce approval and policy gates as release controls.

- Feed spam/abuse outcomes back into campaign and agent tuning.

The strategic takeaway: adoption is no longer the main differentiator. Operational trust interoperability is.

If your team cannot simulate and validate trust behavior before launch, you are still shipping critical journeys with limited visibility.

Sources

- 9to5Mac — iOS 26.4 beta adds support for testing encrypted RCS: https://9to5mac.com/2026/02/16/ios-26-4-beta-adds-support-for-testing-end-to-end-encrypted-rcs-messaging/

- MacRumors — iOS 26.4 RCS encryption testing coverage: https://www.macrumors.com/2026/02/16/ios-26-4-rcs-encryption-testing/

- GSMA — RCS encryption and interoperability direction: https://www.gsma.com/newsroom/article/rcs-encryption-a-leap-towards-secure-and-interoperable-messaging/

- TechCrunch — Google + Airtel anti-spam collaboration in India: https://techcrunch.com/2026/03/01/google-looks-to-tackle-longstanding-rcs-spam-in-india-but-not-alone/

Enterprise Connect 2026: AI Agent RCS Execution Readiness Is the Bottleneck

EC2026 confirmed strong AI momentum, but the hard problem is now agent-to-user RCS execution readiness: testing, governance, and reliable launch operations.

At Enterprise Connect 2026, one signal was hard to miss: enterprise teams are accelerating AI in customer conversations.

But the practical blocker is shifting. It’s no longer "Can we build an AI agent?" It’s "Can we launch agent-to-user RCS journeys with confidence before production?"

The Market Signal Is Strong

Vendors are clearly in execution mode:

- Twilio announced its KPN partnership to deliver "nationwide access to RCS for Business in the Netherlands," reinforcing scale readiness in Europe.

- Airtel and Google announced collaboration to "advance spam protection in India with secure RCS messaging," raising trust and safety expectations for business messaging.

- Google RBM keeps shipping platform updates across launch-state and operational controls.

These are strong adoption signals. But adoption momentum also raises the quality bar.

Why AI Agents Make the Gap More Expensive

AI agents compress content and response cycles. That speed is valuable — but it increases launch risk if validation is weak.

Three failure patterns matter most:

- Behavior drift at handoff points: Agent behavior can look coherent in controlled tests but break at escalation/fallback boundaries.

- Channel/runtime mismatch: Logic built on web/chat assumptions often fails under RCS delivery realities (timing, retries, rendering variance, approval-state transitions).

- Governance lag: Teams can ship creative and prompts quickly, while policy controls, approval gates, and auditability lag behind.

The New Differentiator: Execution Readiness

EC2026 conversations suggest the next competitive edge is not raw AI capability. It is operational discipline around launch.

Winning teams are building a repeatable readiness layer that includes:

- pre-launch simulation for agent conversation paths,

- policy and approval gates tied to deployment state,

- fallback and escalation validation,

- and post-launch telemetry loops for rapid correction.

A Practical 30-Day Plan

If you’re shipping AI-agent messaging now, the fastest way to reduce risk is:

- Define a launch-readiness checklist specific to agent-driven RCS journeys.

- Add mandatory pre-launch validation for high-impact intents.

- Enforce policy/approval gates as release controls, not documentation.

- Instrument runtime signals (errors, drop-offs, opt-outs, out-of-order events).

- Run weekly reliability reviews before scaling volume.

Final Takeaway

Enterprise Connect 2026 confirmed AI-agent momentum.

Now the bottleneck is execution readiness on RCS: testing, governance, and reliability before scale.

The teams that win won’t be the ones with the most AI demos. They’ll be the ones that can ship agent-to-user RCS experiences safely, repeatedly, and fast.

Sources

- Twilio + KPN RCS partnership (Mar 4, 2026): https://www.twilio.com/en-us/press/releases/twilio-and-kpn-partnership-unlocks-the-next-generation-of-secure

- Airtel + Google anti-spam collaboration (Mar 1, 2026): https://www.airtel.in/press-release/03-2026/airtel-and-google-collaborate-to-advance-spam-protection-in-india-with-secure-rcs-messaging/

- Google RBM latest releases: https://developers.google.com/business-communications/rcs-business-messaging/whats-new/latest-releases

The Cross-Channel Parity Debt in RCS Agent Launches

RCS infrastructure is maturing fast, but teams still add RCS late and inherit channel-specific drift. The real bottleneck is cross-channel parity debt.

Most teams still launch AI messaging agents in this order: Slack → Discord → WhatsApp… and RCS later.

That sequence feels practical. But it creates a hidden execution tax: cross-channel parity debt.

By the time RCS is added, core agent logic is already tuned to channels with different UX, identity, and delivery behavior. Then the hard failures appear late:

- inbound events don’t behave the same,

- rich cards render differently by device and carrier,

- and governance logic that looked fine in pilot breaks in production.

2026 Signals: RCS Is Maturing Fast

The ecosystem momentum is real:

- Twilio and KPN announced nationwide RCS for Business coverage in the Netherlands.

- Airtel and Google launched AI-powered spam protection collaboration for secure RCS in India.

- Google RBM release updates continue expanding launch-state controls and operational analytics.

- Apple and Google continue encrypted RCS momentum between iPhone and Android.

The market signal is clear: channel readiness is accelerating.

The Real Risk: Workflow Lag

If RCS is treated as a final-mile add-on, teams keep shipping channel-specific patches instead of stable messaging systems.

That creates a brittle operating model:

- one intent behaves differently by channel,

- reliability issues are discovered post-launch,

- and approvals become a proxy for quality even when runtime behavior is drifting.

The Parity-First Operating Model

A stronger approach is to design for parity from day one:

- Separate intent logic from channel formatting

- Simulate inbound edge cases (retry/order/idempotency) before launch

- Validate rich media + fallback across representative device/carrier mixes

- Gate release with policy + approval-state checks, not only creative sign-off
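
The retry, ordering, and idempotency cases in the list above can be exercised with a small guard in front of the agent logic. This is a sketch under assumptions: the field names `eventId`, `conversationId`, and `sequence` are illustrative and not taken from a specific provider's webhook schema.

```javascript
// Deduplicates inbound events by ID and flags events that arrive out of order.
class InboundEventGuard {
  constructor() {
    this.seen = new Set();         // event IDs already handled
    this.lastSequence = new Map(); // conversationId -> highest sequence handled
  }

  // Returns "process", "duplicate", or "stale" for the given event.
  classify(event) {
    if (this.seen.has(event.eventId)) return "duplicate";
    this.seen.add(event.eventId);
    const last = this.lastSequence.get(event.conversationId) ?? -1;
    if (event.sequence <= last) return "stale";
    this.lastSequence.set(event.conversationId, event.sequence);
    return "process";
  }
}
```

Replaying a recorded event stream with duplicates and reordering through `classify` before launch shows whether the agent would act twice on the same user reply. In production the `seen` set would need an expiry policy so it does not grow without bound.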

Why This Matters Now

RCS is no longer just a “new channel” decision. It is becoming the stress test for whether your agent operations are production-grade.

The teams that win in 2026 won’t be the fastest to connect channels. They’ll be the fastest to ship consistent behavior across channels.

Sources

- Google RBM latest releases: https://developers.google.com/business-communications/rcs-business-messaging/whats-new/latest-releases

- Twilio + KPN secure RCS rollout: https://www.twilio.com/en-us/press/releases/twilio-and-kpn-partnership-unlocks-the-next-generation-of-secure

- Airtel + Google anti-spam collaboration: https://www.airtel.in/press-release/03-2026/airtel-and-google-collaborate-to-advance-spam-protection-in-india-with-secure-rcs-messaging/

- iPhone/Android encrypted RCS context: https://9to5google.com/2026/02/23/google-messages-encrypted-rcs-iphone/

The Runtime Governance Gap for AI Agents on RCS

AI agents can pass pre-launch QA and still fail in production when policies, launch states, and channel behavior drift. The new bottleneck is runtime governance.

RCS is maturing quickly.

But for AI-agent teams, the hardest problem is no longer launch approval. It is runtime governance: keeping agent behavior policy-safe and performance-stable after launch.

In 2026, platform and ecosystem signals are getting stronger:

- Google RBM added new launch-state transition controls and richer operational telemetry.

- Airtel and Google announced collaboration to reduce spam in India.

- Twilio and KPN expanded secure, enterprise-ready RCS coverage in the Netherlands.

These are important advances. But they do not remove a core risk for AI teams:

An agent that passed QA last week may not stay compliant this week after prompt, workflow, or fallback changes.

Why “Approved” Is Not the Same as “Safe in Production”

Approval is a checkpoint. It is not a guarantee of runtime stability.

AI-agent systems evolve continuously:

- prompts get updated,

- tools and MCP permissions change,

- escalation logic shifts,

- fallback pathways drift by channel/device.

So teams can end up with “approved but unstable” campaigns and conversations.

The New Operating Model: State + Behavior + Trust

For AI-agent-first RCS programs, governance needs to become a loop:

State governance

- Track transition readiness (Pending → Launched → Suspended) as an operational discipline.

Behavior validation

- Re-test multi-turn agent flows before each major update.

- Validate fallback, escalation, and edge-case behavior under realistic conditions.

Trust telemetry

- Feed unsubscribe/spam trends back into pre-launch and pre-release checks.

- Treat trust signals as product inputs, not only postmortem data.
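
The loop above can be approximated as a release gate that checks state, behavior, and trust together before each update ships. The sketch below is illustrative only: the state names follow the Pending, Launched, Suspended progression mentioned above, while the relaunch transition and the numeric thresholds are assumptions.

```javascript
// Allowed launch-state transitions (Pending -> Launched -> Suspended).
// The suspended -> launched edge is an assumption for illustration.
const TRANSITIONS = {
  pending: ["launched"],
  launched: ["suspended"],
  suspended: ["launched"],
};

function canTransition(from, to) {
  return (TRANSITIONS[from] || []).includes(to);
}

// A release passes only if the agent is launched, behavior tests pass,
// and trust signals stay under the assumed thresholds.
function releaseGate(
  { launchState, testsPassed, optOutRate, spamReportRate },
  limits = { optOutRate: 0.02, spamReportRate: 0.001 }
) {
  const reasons = [];
  if (launchState !== "launched") reasons.push(`launch state is ${launchState}`);
  if (!testsPassed) reasons.push("multi-turn behavior tests failed");
  if (optOutRate > limits.optOutRate) reasons.push("opt-out rate above limit");
  if (spamReportRate > limits.spamReportRate) reasons.push("spam report rate above limit");
  return { allowed: reasons.length === 0, reasons };
}
```

Returning the list of reasons, not just a boolean, makes the gate usable as an audit record as well as a deployment check.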

What This Means for Teams

The competitive edge is shifting from:

- “Can we launch RCS?”

to:

- “Can our AI agent stay launched safely while we iterate quickly?”

That requires a validation/testing layer independent of operator approvals—so teams can test policy-sensitive behavior before changes hit production.

Bottom Line

RCS is becoming a serious AI-agent channel.

The winners will not be the teams with the most automation. They will be the teams with the strongest runtime governance loop across policy, behavior, and trust.

Sources

- Google RBM latest releases: https://developers.google.com/business-communications/rcs-business-messaging/whats-new/latest-releases

- Airtel + Google anti-spam collaboration: https://www.airtel.in/press-release/03-2026/airtel-and-google-collaborate-to-advance-spam-protection-in-india-with-secure-rcs-messaging/

- Twilio + KPN secure RCS rollout: https://www.twilio.com/en-us/press/releases/twilio-and-kpn-partnership-unlocks-the-next-generation-of-secure

- iPhone/Android encrypted RCS context: https://www.theverge.com/tech/879792/apple-iphone-android-rcs-messages-end-to-end-encrypted

Enterprise Connect 2026 Preview: 6 Headlines for RCS, RBM, and Agentic Messaging

The six practical headlines to track at Enterprise Connect 2026 if you care about deployable AI messaging, not just conference hype.

Before keynote clips and product screenshots flood the timeline, here are the six headlines that matter most for teams shipping conversational AI across channels.

1) AI is now the default narrative at Enterprise Connect 2026

The market is no longer asking “Should we use AI?”

The question now is: How fast can we deploy AI-powered communications safely and reliably?

2) RCS/RBM is shifting from pilot to operating channel

RCS is moving beyond test budgets and innovation teams. More organizations are treating it as core customer experience infrastructure.

That shift changes everything: ownership models, QA expectations, and performance accountability.

3) Execution risk is the real story behind the hype

Most failures won’t happen in demos. They’ll happen in production paths:

- fallback logic,

- human handoffs,

- channel-specific behavior,

- and edge-case message rendering.

The teams that acknowledge this early will ship faster with fewer surprises.

4) Governance is now non-negotiable

As agentic conversations scale, policy quality becomes as important as model quality:

- consent boundaries,

- escalation routes,

- approval controls,

- and auditability.

Without governance, scale creates risk, not advantage.

5) Pre-launch validation remains the biggest gap

Many teams still cannot reliably simulate and validate real multi-turn journeys before launch.

That means production often becomes the first true QA environment—an expensive way to learn.

6) Cross-functional execution will separate winners from laggards

The strongest programs won’t be tool-centric. They’ll be workflow-centric:

- marketing defines intent,

- engineering validates behavior,

- operations monitors outcomes,

- and all three iterate together.

Bottom Line

Enterprise Connect 2026 will generate plenty of attention.

But the highest signal is operational maturity: who can move from idea → validated journey → reliable rollout with confidence.

That’s the metric that will matter long after the event closes.

Sources

- Enterprise Connect conference tracks and highlights: https://enterpriseconnect.com/speaker-highlights/

- Enterprise Connect 2026 trend framing (TechTarget): https://www.techtarget.com/searchunifiedcommunications/feature/Enterprise-Connect-2026-brings-AI-from-hype-to-reality

- Sinch AI agent engagement announcement coverage: https://ecommercenews.com.au/story/sinch-unveils-ai-agent-tools-for-customer-engagement

- Google RCS Business Messaging docs: https://developers.google.com/business-communications/rcs-business-messaging/

- Google RBM latest releases: https://developers.google.com/business-communications/rcs-business-messaging/whats-new/latest-releases

- Infobip Juniper RCS recognition (ecosystem signal): https://www.businesswire.com/news/home/20260219371852/en/Infobip-Recognized-as-RCS-for-Business-Leader-by-Juniper-Research

The Policy-as-Code Gap in RCS for AI Agents

RCS security and governance controls are improving, but enterprise AI-agent teams still need pre-launch policy simulation to ship safely at speed.

RCS is entering a more mature phase in 2026.

Recent announcements show clear progress in secure business messaging and anti-spam controls. That’s great for enterprise adoption.

But a practical gap remains for teams shipping conversational AI agents:

Governance controls are improving faster than pre-launch policy simulation.

The Problem Isn’t Channel Access Anymore

For many teams, the question used to be, “Can we launch RCS at all?”

Now the real question is:

Can we launch safely, repeatably, and at AI iteration speed?

When one agent flow spans RCS, WhatsApp, and chat surfaces, teams must validate more than copy and design:

- Do unsubscribe and consent rules fire correctly?

- Does escalation trigger at the right moments?

- Do fallback paths behave consistently by channel?

- Do device and carrier differences introduce policy edge cases?

Most teams still answer these with manual checklists and ad-hoc QA.

Why Manual QA Breaks for AI-Agent Workflows

AI-agent behavior changes fast. Prompts evolve. Tools update. Decision logic gets tuned frequently.

Manual QA does not scale with that pace. It introduces three risks:

- Coverage gaps — high-risk turns are missed.

- Regression drift — fixes in one flow break another.

- Late discovery — policy issues show up after launch.

The result is a costly cycle: submit, wait, launch, discover, patch, repeat.

A Better Model: Policy-Aware Simulation Before Production

Teams need a validation layer where policy is treated as testable logic.

In practice, that means defining and validating rules for:

- consent and opt-out handling,

- escalation conditions,

- fallback behavior,

- channel-specific constraints,

- and multi-turn safety boundaries.

It also means running repeatable pre-launch regression suites across priority scenarios.
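To show what "policy as testable logic" could look like, here is a minimal sketch that expresses two of the rules above (opt-out handling and escalation) as plain functions over a simulated conversation, then runs them as a small regression suite. Everything in it is an assumption made for illustration: the `Turn` structure, the `action` labels, the opt-out keywords, and the rule names are hypothetical and do not reflect RCSX or any vendor API.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    role: str         # "user" or "agent"
    text: str
    action: str = ""  # hypothetical label for what the agent did, e.g. "unsubscribe"


OPT_OUT_WORDS = {"stop", "unsubscribe"}  # assumed keywords


def rule_opt_out_honored(turns):
    """After a user opt-out, exactly one agent turn may follow, and it must unsubscribe."""
    for i, turn in enumerate(turns):
        if turn.role == "user" and turn.text.strip().lower() in OPT_OUT_WORDS:
            following = [t for t in turns[i + 1:] if t.role == "agent"]
            if len(following) != 1 or following[0].action != "unsubscribe":
                return False
    return True


def rule_escalation_on_request(turns):
    """A user message mentioning a human must be answered with an 'escalate' action."""
    for i, turn in enumerate(turns):
        if turn.role == "user" and "human" in turn.text.lower():
            nxt = next((t for t in turns[i + 1:] if t.role == "agent"), None)
            if nxt is None or nxt.action != "escalate":
                return False
    return True


RULES = [rule_opt_out_honored, rule_escalation_on_request]


def run_suite(scenarios):
    """Return {scenario_name: [names of failed rules]} for every scenario."""
    return {
        name: [rule.__name__ for rule in RULES if not rule(turns)]
        for name, turns in scenarios.items()
    }
```

Because each rule is an ordinary function, the same suite can be re-run whenever a prompt, tool, or fallback path changes, and any scenario with a non-empty failure list blocks the release.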

This is the shift from “we can send messages” to “we can trust what we ship.”

What Winning Teams Will Do Next

As RCS adoption accelerates, high-performing teams will:

- standardize policy checks as part of release workflows,

- test multi-channel agent journeys before launch,

- and align growth, product, and compliance on a single quality gate.

The competitive edge will not be having more channels.

It will be shipping with confidence at the speed conversational AI now demands.

Sources

- TechCrunch — Google anti-spam in India with Airtel: https://techcrunch.com/2026/03/01/google-looks-to-tackle-longstanding-rcs-spam-in-india-but-not-alone/

- Airtel Press Release — Airtel + Google secure RCS messaging: https://www.airtel.in/press-release/03-2026/airtel-and-google-collaborate-to-advance-spam-protection-in-india-with-secure-rcs-messaging/

- Twilio Press Release — Twilio + KPN secure messaging partnership: https://www.twilio.com/en-us/press/releases/twilio-and-kpn-partnership-unlocks-the-next-generation-of-secure

- Google RBM Latest Releases: https://developers.google.com/business-communications/rcs-business-messaging/whats-new/latest-releases

Post-Launch Signals vs Pre-Launch Certainty in RCS

RCS governance is improving quickly in 2026, but marketing teams still lack pre-launch certainty across device/carrier/channel combinations.

RCS operations are maturing fast in 2026.

Teams now have stronger governance signals than before:

- Carrier approval comments in Google RBM workflows

- New unsubscribe/spam trend fields in RBM analytics

- Broader rollout momentum like Twilio + KPN in the Netherlands

- Stronger anti-spam controls such as Airtel + Google in India

This progress matters. It reduces risk and improves operational visibility.

The Core Gap: Governance Improved Faster Than Predictability

What we keep hearing from operators, marketers, and developer communities is consistent:

- “How do we safely test multi-turn conversations before launch?”

- “How do we avoid channel- and device-specific regressions?”

- “How do we iterate quickly without increasing policy risk?”

In short, teams are often using post-launch analytics to discover pre-launch mistakes.

Why This Hits Growth Teams Hard

When confidence is low before submission, teams become conservative:

- Rich cards and carousels are underused

- QA cycles become manual and slow

- Creative iteration stalls

- Compliance review becomes a bottleneck

The outcome is predictable: slower campaigns, higher launch risk, and avoidable conversion loss.

A Better Operating Model: Add a Pre-Launch Validation Layer

A practical RCS operating model combines pre-launch validation with post-launch monitoring:

Pre-launch validation

- Emulate key journeys and edge cases

- Validate rendering and interaction logic across target scenarios

- Catch breakpoints before carrier submission

Post-launch monitoring

- Track unsubscribe/spam trends

- Monitor performance signals by campaign

- Iterate from real-world results

Both are required. Post-launch metrics show what happened. Pre-launch emulation helps control what happens next.
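On the pre-launch side, one simple starting point is to enumerate the device/carrier/channel combinations you care about and list the ones with no passing test run. The sketch below assumes a hypothetical `coverage_gaps` helper and placeholder device, carrier, and channel names; it only tracks coverage and does not perform any rendering or emulation itself.

```python
from itertools import product


def coverage_gaps(devices, carriers, channels, tested):
    """Return the device/carrier/channel combinations with no passing pre-launch run.

    tested: a set of (device, carrier, channel) tuples that already passed.
    """
    return [
        combo
        for combo in product(devices, carriers, channels)
        if combo not in tested
    ]


# Placeholder values for illustration only.
gaps = coverage_gaps(
    devices=["iphone", "android"],
    carriers=["carrier_a"],
    channels=["rcs", "sms_fallback"],
    tested={("iphone", "carrier_a", "rcs"), ("android", "carrier_a", "rcs")},
)
```

In this example the two `sms_fallback` combinations remain untested, which is the kind of gap that otherwise tends to surface only in post-launch metrics.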

The 2026 Advantage

As RCS scales, winners won’t be teams with the most templates. They’ll be teams with the fastest safe iteration loop—the ability to test quickly, launch confidently, and learn without avoidable production errors.

That is the operating shift that matters for enterprise RCS programs in 2026.

Sources

- Google RBM Latest Releases: https://developers.google.com/business-communications/rcs-business-messaging/whats-new/latest-releases

- KPN adopts Twilio platform for RCS Business Messaging: https://www.telecompaper.com/news/kpn-adopts-twilio-platform-for-rcs-business-messaging--1564200

- Airtel and Google collaborate to advance spam protection in India with secure RCS messaging: https://www.airtel.in/press-release/03-2026/airtel-and-google-collaborate-to-advance-spam-protection-in-india-with-secure-rcs-messaging/

MWC 2026: What Every Brand Considering RCS Needs to Know

We analyzed 12+ LinkedIn posts from MWC 2026. Every vendor talked about RCS being the future. Nobody mentioned the real problem: brands still can't test their campaigns before launch.

Keywords: MWC 2026, RCS, RBM, vendor insights, testing gap, campaign validation, device fragmentation

#MWC #RCS #RBM #Messaging

MWC 2026 is in the books, and the RCS conversation is louder than ever. We analyzed 12+ LinkedIn posts from the industry's biggest names—Infobip, Sinch, HORISEN, iBASIS, and more. Here's what we found.

What Vendors ARE Saying

The dominant narrative at MWC 2026 was clear: RCS is winning.

Infobip showcased their AI-powered RCS partnership with Haas F1 Team, positioning RCS as the future of fan engagement. Their post emphasized "One Communication Platform" messaging and AI integration with Google.

Sinch's Miriam Liszewski captured the optimism: "RCS is the messaging upgrade businesses didn't know they needed" and "It's rich, interactive, secure… and yes fun!"

Carrier partnerships are accelerating. Twilio + KPN announced nationwide RCS Business Messaging in the Netherlands, with iOS support expected in 2026. Airtel + Google launched carrier-level spam protection for RCS in India—463 million subscribers now have verified business identity at the carrier level.

Fabrizio Salanitri from HORISEN, in a Juniper Research interview, framed the industry evolution as "from telecom foundations to AI-powered automation and omnichannel orchestration."

The 11-Year Problem

But not everyone is celebrating.

Andreas Constantinides asked the hard question that no vendor wants to answer: "Is SMS dying?"—11 years after first hearing about RCS at MWC 2015.